Journal Description

Information

Information is a scientific, peer-reviewed, open access journal of information science and technology, data, knowledge, and communication, and is published monthly online by MDPI. The International Society for Information Studies (IS4SI) is affiliated with Information, and its members receive discounts on the article processing charges.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Ei Compendex, dblp, and other databases.

- Journal Rank: CiteScore - Q2 (Information Systems)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 18 days after submission; acceptance to publication is undertaken in 2.9 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.1 (2022)

5-Year Impact Factor: 2.9 (2022)

Latest Articles

Utilizing Machine Learning for Context-Aware Digital Biomarker of Stress in Older Adults

Information 2024, 15(5), 274; https://doi.org/10.3390/info15050274 (registering DOI) - 12 May 2024

Abstract

Identifying stress in older adults is a crucial field of research in health and well-being, because it allows timely preventive measures that can help save lives. Accurate, precise, and nonobtrusive stress detection is therefore necessary. Researchers have proposed many statistical measurements to associate stress with sensor readings from digital biomarkers. With the recent progress of Artificial Intelligence in the healthcare domain, machine learning is showing promising results in stress detection. Still, the viability of machine learning for digital biomarkers of stress is under-explored. In this work, we first investigate the performance of a supervised machine learning algorithm (Random Forest) with manual feature engineering for stress detection with contextual information. The concentration of salivary cortisol was used as the gold standard. Our framework categorizes stress into No Stress, Low Stress, and High Stress by analyzing digital biomarkers gathered from wearable sensors. We also provide a thorough understanding of stress in older adults by combining physiological data obtained from wearable sensors with contextual clues from a stress protocol. Our context-aware machine learning model, using sensor fusion, achieved a macro-averaged F1 score of 0.937 and an accuracy of 92.48% in identifying three stress levels. We further extend our work to remove the burden of manual feature engineering. We explore a Convolutional Neural Network (CNN)-based feature encoder and cortisol biomarkers to detect stress using contextual information. We provide an in-depth look at the CNN-based feature encoder, which effectively separates useful features from physiological inputs.
Both of our proposed frameworks, i.e., Random Forest with engineered features and a Fully Connected Network with CNN-based features, validate that the integration of digital biomarkers of stress can provide more insight into the stress response even without any self-reporting or caregiver labels. Our method with sensor fusion achieves an accuracy of 83.7797% and an F1 score of 0.7552 without context, and an accuracy of 96.7525% and an F1 score of 0.9745 with context, an increase of about 4 percentage points in accuracy and about 0.04 in F1 score over RF.
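The macro-averaged F1 score reported above is the unweighted mean of the per-class F1 scores, so No Stress, Low Stress, and High Stress count equally regardless of how many samples each class has. A minimal Python sketch of that computation follows; the function names and the toy labels are illustrative assumptions, not the authors' code or data.

```python
def macro_f1(y_true, y_pred, labels):
    """Unweighted mean of per-class F1 scores (macro average)."""
    scores = []
    for c in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores.append(f1)
    return sum(scores) / len(scores)


def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical toy predictions for the three stress levels
LABELS = ["No Stress", "Low Stress", "High Stress"]
y_true = ["No Stress", "No Stress", "Low Stress", "Low Stress", "High Stress", "High Stress"]
y_pred = ["No Stress", "Low Stress", "Low Stress", "Low Stress", "High Stress", "No Stress"]
print(round(macro_f1(y_true, y_pred, LABELS), 4), round(accuracy(y_true, y_pred), 4))
```

In this toy case the per-class F1 scores are 0.5, 0.8, and 2/3, so the macro average is about 0.656 while plain accuracy is 4/6; the two metrics diverge whenever errors are concentrated in some classes.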

(This article belongs to the Special Issue From Data to Diagnosis: Recent Advances of Machine Learning in Biomedical and Health Informatics)

Open Access Article

Insights into Cybercrime Detection and Response: A Review of Time Factor

by

Hamed Taherdoost

Information 2024, 15(5), 273; https://doi.org/10.3390/info15050273 (registering DOI) - 12 May 2024

Abstract

Amid an unprecedented period of technological progress, integrating digital platforms into many areas of life has become indispensable, fundamentally altering how governments, businesses, and individuals operate. However, rapid digitization has also given rise to cybercrime, which exploits weaknesses in interconnected systems. As organizations, businesses, and individuals increasingly depend on digital platforms for communication, commerce, and information sharing, malicious actors have identified the vulnerabilities of these systems and exploit them through hacking, identity theft, ransomware, and phishing attacks. This study examines 28 research papers on intrusion detection systems (IDS) and, in particular, phishing detection, focusing on how quickly cyberattacks can be detected and responded to. We investigate various approaches and quantitative measurements to understand the link between reaction time and detection time, and we emphasize the need to minimize both, especially for phishing attempts, to improve cybersecurity. In smart grids and automotive control networks, faster attack detection is especially important, and machine learning can help achieve it. The review also stresses the need to improve protocols to address growing cyber risks while maintaining scalability, interoperability, and resilience. Although machine-learning-based techniques have the potential to improve detection precision and reaction speed, obstacles must still be overcome to attain real-time capabilities and adapt to constantly changing threats.
To create effective defensive mechanisms against cyberattacks, future research topics include investigating innovative methodologies, integrating real-time threat intelligence, and encouraging collaboration.
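In a simple timing model, an attack stays active from its start until the response, so the exposure window equals the detection time plus the response time; this is why minimizing both matters. The Python sketch below computes these averages from incident timestamps. The `Incident` record, its field names, and the sample values are hypothetical and are not taken from any of the reviewed papers.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Incident:
    attack_start: float  # timestamps in seconds (hypothetical log format)
    detected: float
    responded: float

    def __post_init__(self):
        # Reject records whose events are out of chronological order
        if not (self.attack_start <= self.detected <= self.responded):
            raise ValueError("expected attack_start <= detected <= responded")


def summarize(incidents):
    """Mean detection time, mean response time, and mean exposure window."""
    detect = [i.detected - i.attack_start for i in incidents]
    respond = [i.responded - i.detected for i in incidents]
    exposure = [i.responded - i.attack_start for i in incidents]  # = detect + respond
    return {
        "mean_time_to_detect": mean(detect),
        "mean_time_to_respond": mean(respond),
        "mean_exposure": mean(exposure),
    }


log = [Incident(0.0, 10.0, 40.0), Incident(100.0, 130.0, 150.0)]
print(summarize(log))
```

Shortening either interval shortens the exposure window by the same amount, which is the quantitative relationship the review discusses between detection and reaction times.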

(This article belongs to the Special Issue Cybersecurity, Cybercrimes, and Smart Emerging Technologies)

Open Access Article

Control of Qubit Dynamics Using Reinforcement Learning

by

Dimitris Koutromanos, Dionisis Stefanatos and Emmanuel Paspalakis

Information 2024, 15(5), 272; https://doi.org/10.3390/info15050272 (registering DOI) - 11 May 2024

Abstract

The progress in machine learning during the last decade has had a considerable impact on many areas of science and technology, including quantum technology. This work explores the application of reinforcement learning (RL) methods to the quantum control problem of state transfer in a single qubit. The goal is to create an RL agent that learns an optimal policy and thus discovers optimal pulses to control the qubit. The most crucial step is to formulate the problem of interest mathematically as a Markov decision process (MDP), which enables the use of RL algorithms to solve the quantum control problem. Deep learning and deep neural networks make it possible to employ continuous action and state spaces, providing expressivity and generalization. This flexibility allows the quantum state transfer problem to be formulated as an MDP in several different ways. All the developed methodologies are applied to the fundamental problem of population inversion in a qubit. In most cases, the derived optimal pulses achieve a fidelity equal to or higher than 0.9999, as required by quantum computing applications. The present methods can be easily extended to quantum systems with more energy levels and may be used for the efficient control of collections of qubits and to counteract the effect of noise, which are important topics for quantum sensing applications.
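To make the MDP formulation concrete, the sketch below wraps a single qubit as an episodic environment: the state is the qubit state vector, each action is a constant Rabi frequency applied for one time step, and the reward is the final excited-state population (the population-inversion fidelity). It is one possible formulation under assumed units (ħ = 1) and an assumed Hamiltonian H = (Δσz + Ωσx)/2; the class name and parameters are hypothetical, no RL agent is included, and the paper's own formulations may differ.

```python
import numpy as np

# Pauli matrices and identity for a two-level system (qubit)
SX = np.array([[0, 1], [1, 0]], dtype=complex)
SZ = np.array([[1, 0], [0, -1]], dtype=complex)
I2 = np.eye(2, dtype=complex)


def step_propagator(omega: float, delta: float, dt: float) -> np.ndarray:
    """Exact propagator exp(-i H dt) for H = (delta*SZ + omega*SX)/2 held constant."""
    norm = np.hypot(omega, delta)
    if norm == 0.0:
        return I2.copy()
    n_dot_sigma = (delta * SZ + omega * SX) / norm  # unit rotation axis
    return np.cos(norm * dt / 2) * I2 - 1j * np.sin(norm * dt / 2) * n_dot_sigma


class QubitInversionEnv:
    """Hypothetical MDP: state = qubit state vector, action = Rabi frequency for
    one time step, reward = excited-state population at the end of the episode."""

    def __init__(self, n_steps: int = 10, total_time: float = 1.0, delta: float = 0.0):
        self.n_steps = n_steps
        self.dt = total_time / n_steps
        self.delta = delta
        self.reset()

    def reset(self) -> np.ndarray:
        self.t = 0
        self.psi = np.array([1, 0], dtype=complex)  # start in the ground state |0>
        return self.psi.copy()

    def step(self, omega: float):
        self.psi = step_propagator(omega, self.delta, self.dt) @ self.psi
        self.t += 1
        done = self.t >= self.n_steps
        # Sparse reward: fidelity with the target |1> only at the final step
        reward = float(abs(self.psi[1]) ** 2) if done else 0.0
        return self.psi.copy(), reward, done


env = QubitInversionEnv()
env.reset()
for _ in range(env.n_steps):
    _, reward, done = env.step(np.pi)  # constant pulse with total area pi
```

Applying Ω = π for ten steps of length 0.1 gives a resonant π pulse (area ΩT = π), so the final reward is 1 up to rounding; an RL agent would instead have to discover such a pulse sequence by interacting with `step`.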

(This article belongs to the Special Issue Quantum Information Processing and Machine Learning)

Open Access Article

Cyclic Air Braking Strategy for Heavy Haul Trains on Long Downhill Sections Based on Q-Learning Algorithm

by

Changfan Zhang, Shuo Zhou, Jing He and Lin Jia

Information 2024, 15(5), 271; https://doi.org/10.3390/info15050271 (registering DOI) - 11 May 2024

Abstract

Cyclic air braking is a key factor affecting the safe operation of trains on long downhill sections. However, a train's cyclic braking strategy is constrained by multiple factors, such as the driving environment, speed, and air-refilling time. To address this challenge, a Q-learning-based cyclic braking strategy for heavy haul trains on long downhill sections is proposed. First, the operating environment of a heavy haul train on long downhill sections is designed, considering various constraint parameters, such as the characteristics of special operating routes, allowable operating speeds, and the air-refilling time of the train brake pipe. Second, the operating status and braking operation of the train are discretized to establish a Q-table based on state–action pairs, and the algorithm is trained by continuously updating the Q-table. Finally, taking a heavy haul train formation as the study object, actual line data from the Shuozhou–Huanghua Railway are used for simulation experiments under different hyperparameters and entry speed conditions. The results show that safe and stable cyclic braking of a heavy haul train on long downhill sections is achieved, verifying the effectiveness of the Q-learning control strategy.
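The Q-table training described above follows the standard tabular Q-learning update Q(s, a) ← Q(s, a) + α[r + γ max_a′ Q(s′, a′) − Q(s, a)]. The Python sketch below applies it to a deliberately crude toy model in which states are discretized as (speed bin, remaining air-refilling steps) and the brake cannot be re-applied until the pipe has refilled. All dynamics, constants, and rewards here are hypothetical placeholders, not the paper's train model or the Shuozhou–Huanghua line data.

```python
import random
from collections import defaultdict

# Hypothetical toy model, NOT the paper's train dynamics:
# state = (speed_bin, lockout), where lockout counts remaining air-refilling steps.
V_MIN, V_MAX = 2, 9      # allowable speed band (discrete bins)
BRAKE_DROP = 3           # speed bins removed by one brake application
REFILL_STEPS = 2         # steps during which the brake cannot be re-applied
ACTIONS = (0, 1)         # 0 = release/coast, 1 = apply air brake


def env_step(state, action):
    speed, lockout = state
    if action == 1 and lockout == 0:
        speed -= BRAKE_DROP          # braking only works once the pipe is refilled
        lockout = REFILL_STEPS
    else:
        speed += 1                   # the downhill grade accelerates a coasting train
        lockout = max(lockout - 1, 0)
    violated = not (V_MIN <= speed <= V_MAX)
    reward = -10.0 if violated else 1.0
    return (speed, lockout), reward, violated


def q_update(q, s, a, r, s_next, alpha, gamma, terminal):
    """One tabular Q-learning step: Q(s,a) += alpha * (target - Q(s,a))."""
    target = r if terminal else r + gamma * max(q[s_next][b] for b in ACTIONS)
    q[s][a] += alpha * (target - q[s][a])


def train(episodes=2000, horizon=50, alpha=0.1, gamma=0.95, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = defaultdict(lambda: [0.0, 0.0])   # Q-table keyed by discrete state
    for _ in range(episodes):
        s = (5, 0)                         # hypothetical entry speed bin, brake ready
        for _ in range(horizon):
            if rng.random() < epsilon:
                a = rng.choice(ACTIONS)    # explore
            else:
                a = max(ACTIONS, key=lambda b: q[s][b])  # exploit
            s_next, r, done = env_step(s, a)
            q_update(q, s, a, r, s_next, alpha, gamma, done)
            s = s_next
            if done:
                break
    return q


q_table = train()
```

Folding the refilling countdown into the state keeps the problem Markovian, so the air-refilling constraint is learned from the Q-values rather than enforced by a hand-written rule.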

Open Access Article

A Comparative Analysis of the Bayesian Regularization and Levenberg–Marquardt Training Algorithms in Neural Networks for Small Datasets: A Metrics Prediction of Neolithic Laminar Artefacts

by

Maurizio Troiano, Eugenio Nobile, Fabio Mangini, Marco Mastrogiuseppe, Cecilia Conati Barbaro and Fabrizio Frezza

Information 2024, 15(5), 270; https://doi.org/10.3390/info15050270 (registering DOI) - 10 May 2024

Abstract

This study presents a comparative analysis of the Bayesian regularization backpropagation and Levenberg–Marquardt training algorithms in neural networks for predicting the metrics of damaged archaeological artifacts, whose state of conservation is often fragmentary for various reasons, such as ritual, use wear, or post-depositional processes. The archaeological artifacts, specifically laminar blanks (so-called blades), come from different sites in the Southern Levant belonging to the Pre-Pottery Neolithic B (PPNB) (10,100/9500–400 cal B.P.). This paper describes the entire analysis procedure, from the normalization of the dataset to the comparative analysis and the resolution of the overfitting problem.
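For reference, the two training algorithms compared in this study are commonly written as follows, in the standard formulation used by neural network toolboxes (Levenberg–Marquardt, and Bayesian regularization with the Gauss–Newton approximation of Foresee and Hagan). Here w holds the n network weights, e the errors on N training targets, J = ∂e/∂w the Jacobian, and μ the damping factor. The abstract does not state the paper's exact settings, so this is background rather than the authors' specification.

```latex
\begin{align}
\Delta\mathbf{w} &= -\left(\mathbf{J}^\top\mathbf{J} + \mu\,\mathbf{I}\right)^{-1}\mathbf{J}^\top\mathbf{e}
  && \text{(Levenberg--Marquardt step)} \\
F(\mathbf{w}) &= \beta E_D + \alpha E_W, \qquad
  E_D = \sum_{i=1}^{N} e_i^2, \qquad E_W = \sum_{j=1}^{n} w_j^2
  && \text{(Bayesian regularization objective)} \\
\gamma &= n - 2\alpha\,\operatorname{tr}\!\left(\mathbf{H}^{-1}\right), \qquad
  \mathbf{H} \approx 2\beta\,\mathbf{J}^\top\mathbf{J} + 2\alpha\,\mathbf{I} \\
\alpha &= \frac{\gamma}{2E_W}, \qquad \beta = \frac{N - \gamma}{2E_D}
\end{align}
```

In Levenberg–Marquardt, μ is decreased after a step that lowers the error and increased otherwise, so the method moves between Gauss–Newton and gradient descent. Bayesian regularization typically applies the same kind of step to F instead of E_D and re-estimates α and β after each iteration; γ is the effective number of parameters, and penalizing large weights through E_W is what helps limit overfitting on small datasets.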

(This article belongs to the Special Issue Techniques and Data Analysis in Cultural Heritage)

Open Access Article

L-PCM: Localization and Point Cloud Registration-Based Method for Pose Calibration of Mobile Robots

by

Dandan Ning and Shucheng Huang

Information 2024, 15(5), 269; https://doi.org/10.3390/info15050269 - 10 May 2024

Abstract

The autonomous navigation of mobile robots consists of three parts: map building, global localization, and path planning. Precise pose data directly affect the accuracy of global localization. However, cumulative sensor errors and the limitations of various estimation strategies can cause large errors in the estimated pose. To address these problems, this paper proposes a pose calibration method based on localization and point cloud registration, called L-PCM. First, the method obtains odometer and IMU (inertial measurement unit) data from the sensors mounted on the mobile robot and uses the UKF (unscented Kalman filter) algorithm to filter and fuse them, obtaining the estimated pose of the mobile robot. Second, AMCL (adaptive Monte Carlo localization) is improved by combining it with the UKF fusion model of the IMU and odometer to obtain a modified global initial pose. Finally, PL-ICP (point-to-line iterative closest point) point cloud registration is used to calibrate the modified global initial pose and obtain the global pose of the mobile robot. Simulation experiments verify that the UKF fusion algorithm reduces the influence of cumulative errors and that the improved AMCL algorithm optimizes the pose trajectory. The average position error is about 0.0447 m, and the average angle error stabilizes at about 0.0049 degrees. Meanwhile, L-PCM is shown to be significantly better than the existing AMCL algorithm, with a position error of about 0.01726 m and an average angle error of about 0.00302 degrees, effectively improving pose accuracy.
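The first stage of L-PCM fuses odometer and IMU data with a UKF. The Python sketch below shows the core mechanics under stated assumptions: a simple unicycle motion model driven by odometer velocities, an IMU that measures heading only, and the scaled unscented transform with the common (α, β, κ) parameterization. The function names, noise values, and models are illustrative, not the paper's, and angle wrapping is ignored for brevity.

```python
import numpy as np


def sigma_points(mean, cov, alpha=0.1, beta=2.0, kappa=0.0):
    """Scaled sigma points and their mean/covariance weights."""
    n = mean.size
    lam = alpha**2 * (n + kappa) - n
    root = np.linalg.cholesky((n + lam) * cov)  # lower-triangular; columns are spread directions
    pts = np.vstack([mean, mean + root.T, mean - root.T])  # shape (2n+1, n)
    wm = np.full(2 * n + 1, 1.0 / (2 * (n + lam)))
    wc = wm.copy()
    wm[0] = lam / (n + lam)
    wc[0] = lam / (n + lam) + (1.0 - alpha**2 + beta)
    return pts, wm, wc


def unscented_transform(f, mean, cov):
    """Propagate a Gaussian (mean, cov) through a nonlinear function f."""
    pts, wm, wc = sigma_points(mean, cov)
    ys = np.array([f(p) for p in pts])
    y_mean = wm @ ys
    d = ys - y_mean
    return y_mean, (wc[:, None] * d).T @ d


def motion_model(pose, v=1.0, omega=0.1, dt=0.1):
    """Unicycle model: pose = (x, y, theta) advanced by assumed odometer velocities."""
    x, y, th = pose
    return np.array([x + v * dt * np.cos(th), y + v * dt * np.sin(th), th + omega * dt])


def ukf_predict(mean, cov, q_cov):
    y_mean, y_cov = unscented_transform(motion_model, mean, cov)
    return y_mean, y_cov + q_cov  # add process noise


def ukf_update(mean, cov, z, h, r_cov):
    """Correct the predicted pose with a measurement z = h(pose) + noise."""
    pts, wm, wc = sigma_points(mean, cov)
    zs = np.array([h(p) for p in pts])
    z_mean = wm @ zs
    dz = zs - z_mean
    dx = pts - mean
    s = (wc[:, None] * dz).T @ dz + r_cov      # innovation covariance
    pxz = (wc[:, None] * dx).T @ dz            # state-measurement cross-covariance
    k = pxz @ np.linalg.inv(s)                 # Kalman gain
    return mean + k @ (z - z_mean), cov - k @ s @ k.T


# One fusion cycle: predict with odometry, then correct with an IMU heading reading.
pose, P = np.zeros(3), np.diag([0.01, 0.01, 0.05])
pose, P = ukf_predict(pose, P, q_cov=np.diag([1e-4, 1e-4, 1e-3]))
pose, P = ukf_update(pose, P, z=np.array([0.012]), h=lambda p: p[2:3], r_cov=np.array([[1e-3]]))
```

For a linear function the unscented transform reproduces the exact mean and covariance, which makes a convenient sanity check; for the nonlinear motion model it captures the effect of heading uncertainty on position without computing Jacobians.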

(This article belongs to the Section Artificial Intelligence)

Open Access Article

The Convergence of Artificial Intelligence and Blockchain: The State of Play and the Road Ahead

by

Dhanasak Bhumichai, Christos Smiliotopoulos, Ryan Benton, Georgios Kambourakis and Dimitrios Damopoulos

Information 2024, 15(5), 268; https://doi.org/10.3390/info15050268 - 9 May 2024

Abstract

Artificial intelligence (AI) and blockchain technology have emerged as increasingly prevalent and influential elements shaping global trends in Information and Communications Technology (ICT). In particular, the synergistic combination of blockchain and AI introduces beneficial, unique features with the potential to enhance the performance and efficiency of existing ICT systems. However, the convergence of these two disruptive technologies is still at a nascent stage and under continuous exploration and study. In this context, this work offers insight into the most significant features of the AI and blockchain intersection. Sixteen outstanding recent articles exploring the combination of AI and blockchain technology were systematically selected and thoroughly investigated. From them, fourteen key features were extracted, including data security and privacy, data encryption, data sharing, decentralized intelligent systems, efficiency, automated decision systems, collective decision making, scalability, system security, transparency, sustainability, device cooperation, and mining hardware design. Moreover, drawing on the related literature from major digital databases, we constructed a timeline of this technological convergence comprising three eras: emerging, convergence, and application. For the convergence era, we categorized the pertinent features into three primary groups: data manipulation, potential applicability to legacy systems, and hardware issues. For the application era, we elaborate on the impact of this technology fusion from the perspective of five distinct focus areas, ranging from Internet of Things applications and cybersecurity to finance, energy, and smart cities. This multifaceted but succinct analysis helps delineate the timeline of AI and blockchain convergence and pinpoint the unique characteristics inherent in their integration.
The paper culminates by highlighting the prevailing challenges and unresolved questions in blockchain and AI-based systems, thereby charting potential avenues for future scholarly inquiry.

(This article belongs to the Special Issue Feature Papers in Information in 2023)

Open Access Article

Modeling- and Simulation-Driven Methodology for the Deployment of an Inland Water Monitoring System

by

Giordy A. Andrade, Segundo Esteban, José L. Risco-Martín, Jesús Chacón and Eva Besada-Portas

Information 2024, 15(5), 267; https://doi.org/10.3390/info15050267 - 9 May 2024

Abstract

In response to the challenges introduced by global warming and increased eutrophication, this paper presents an innovative modeling and simulation (M&S)-driven methodology for developing an automated inland water monitoring system. The system is grounded in a layered Internet of Things (IoT) architecture and integrates cloud, fog, and edge computing to enable real-time environmental surveillance and prediction of harmful algal and cyanobacterial blooms (HACBs). Using autonomous boats as mobile data collection units within the edge layer, the system tracks the proliferation of algae and cyanobacteria and relays critical data upward through the architecture. These data feed into inference models within the cloud layer, which inform predictive algorithms in the fog layer that orchestrate subsequent data-gathering missions. This paper also details a complete development environment that supports the system lifecycle from concept to deployment. The modular design is based on the Discrete Event System Specification (DEVS) and offers high adaptability, allowing developers to simulate, validate, and deploy modules incrementally, cutting across traditional development phases.
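DEVS describes each component as an atomic model defined by a time-advance function, internal and external transition functions, and an output function; this uniform interface is what makes incremental simulation and validation possible. The Python sketch below shows that structure for a hypothetical sampling boat, together with a minimal simulation loop for a single atomic model. It illustrates DEVS semantics under simplifying assumptions (one atomic model, internal events take priority over simultaneous external ones, finite end time) and is not the authors' development environment or any particular DEVS library.

```python
import math


class SamplerBoat:
    """Hypothetical DEVS atomic model of an autonomous boat that takes a water
    sample every `period` time units until it receives an external 'stop' event."""

    def __init__(self, period):
        self.period = period
        self.phase = "sampling"
        self.sigma = period  # time remaining until the next internal event
        self.count = 0

    def time_advance(self):  # ta(s)
        return self.sigma if self.phase == "sampling" else math.inf

    def output(self):  # lambda(s), called just before an internal transition
        return ("sample", self.count)

    def internal_transition(self):  # delta_int(s)
        self.count += 1
        self.sigma = self.period

    def external_transition(self, elapsed, event):  # delta_ext(s, e, x)
        if event == "stop":
            self.phase = "idle"
            self.sigma = math.inf
        else:
            self.sigma -= elapsed  # keep the original schedule for other inputs


def simulate(model, external_events, t_end):
    """Minimal simulator for one atomic model (t_end must be finite).
    Returns the (time, output) pairs produced before t_end."""
    events = sorted(external_events)
    t_last, i, outputs = 0.0, 0, []
    while True:
        t_int = t_last + model.time_advance()
        t_ext = events[i][0] if i < len(events) else math.inf
        t_next = min(t_int, t_ext)
        if t_next > t_end:
            break
        if t_int <= t_ext:  # internal events win ties in this sketch
            outputs.append((t_int, model.output()))
            model.internal_transition()
        else:
            model.external_transition(t_ext - t_last, events[i][1])
            i += 1
        t_last = t_next
    return outputs


print(simulate(SamplerBoat(period=2.0), [(5.0, "stop")], t_end=10.0))
```

Because the boat only communicates through `output` and `external_transition`, the same model can later be coupled to other modules or swapped for a hardware-backed implementation without changing the simulator.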

(This article belongs to the Special Issue Internet of Things and Cloud-Fog-Edge Computing)

Open Access Article

Fake User Detection Based on Multi-Model Joint Representation

by

Jun Li, Wentao Jiang, Jianyi Zhang, Yanhua Shao and Wei Zhu

Information 2024, 15(5), 266; https://doi.org/10.3390/info15050266 - 9 May 2024

Abstract

Existing deep learning-based detection of fake information focuses on the transient detection of the news itself. Compared with user category profile mining and detection, transient detection is prone to higher misjudgment rates because of insufficient temporal information, which poses new challenges to social public opinion monitoring tasks such as fake user detection. This paper proposes a multimodal aggregation portrait model (MAPM) based on multi-model joint representation for social media platforms. It constructs a deep learning-based multimodal fake user detection framework by analyzing user behavior datasets within a time retrospective window. It integrates a pre-trained Domain Large Model to represent user behavior data across multiple modalities, thereby constructing a highly generalizable implicit behavior feature spectrum for users. Because existing fake user behavior mining tends to neglect time-series features, this study introduces an improved network called Sequence Interval Detection Net (SIDN), based on Sequence to Sequence (seq2seq), to characterize time interval sequence behaviors, achieving strong expressive capability for detecting fake behaviors within the time window. Finally, the combination of latent behavioral features and explicit characteristics serves as the input for spectral clustering to detect fraudulent users. Experimental results on a real Weibo dataset demonstrate that the proposed model outperforms detection based on explicit user features, improving detection accuracy by 27.0%.
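The final step feeds the combined latent and explicit features to spectral clustering. As a simplified illustration, the Python sketch below concatenates two hypothetical feature blocks and splits users into two groups by the sign of the Fiedler vector of the normalized graph Laplacian built from an RBF affinity. This two-way spectral partition is a stand-in for, not a reproduction of, the paper's clustering setup; the `gamma` value and the toy feature values are invented.

```python
import numpy as np


def fuse_features(latent, explicit):
    """Concatenate latent (learned) and explicit (profile) features per user."""
    return np.hstack([latent, explicit])


def spectral_bipartition(features, gamma=1.0):
    """Two-way spectral partition by the sign of the Fiedler vector."""
    sq_dist = np.sum((features[:, None, :] - features[None, :, :]) ** 2, axis=-1)
    w = np.exp(-gamma * sq_dist)  # RBF affinity between users
    np.fill_diagonal(w, 0.0)      # no self-loops
    d_inv_sqrt = 1.0 / np.sqrt(w.sum(axis=1))
    lap = np.eye(len(w)) - d_inv_sqrt[:, None] * w * d_inv_sqrt[None, :]  # normalized Laplacian
    _, vecs = np.linalg.eigh(lap)  # eigenvalues are returned in ascending order
    fiedler = d_inv_sqrt * vecs[:, 1]
    return (fiedler > 0).astype(int)


# Hypothetical toy users: two behavioral groups in a 2-D latent space plus one explicit feature
latent = np.array([[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [2.0, 0.0], [2.1, 0.0], [2.0, 0.1]])
explicit = np.array([[0.0], [0.05], [0.0], [0.0], [0.05], [0.0]])
labels = spectral_bipartition(fuse_features(latent, explicit))
print(labels)
```

Which group receives label 1 is arbitrary; in practice, one would typically use more than two eigenvectors with k-means (or a library implementation) and standardize the feature blocks before fusing them.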

(This article belongs to the Special Issue 2nd Edition of Information Retrieval and Social Media Mining)

Open Access Article

Detection of Korean Phishing Messages Using Biased Discriminant Analysis under Extreme Class Imbalance Problem

by

Siyoon Kim, Jeongmin Park, Hyun Ahn and Yonggeol Lee

Information 2024, 15(5), 265; https://doi.org/10.3390/info15050265 (registering DOI) - 7 May 2024

Abstract

In South Korea, the rapid proliferation of smartphones has led to an increase in messenger phishing attacks associated with electronic communication financial scams. In response, various phishing detection algorithms have been proposed. However, collecting messenger phishing data is challenging because of concerns about its potential use in criminal activities. Consequently, a Korean phishing dataset tends to be imbalanced, with general messages outnumbering phishing messages. This class imbalance and data scarcity can lead to overfitting, making high performance difficult to achieve. To solve this problem, this paper proposes a phishing message classification method using Biased Discriminant Analysis (BDA) without resorting to data augmentation techniques. By optimizing the parameters of BDA, we achieved exceptionally high performance in the phishing message classification experiment, with a recall of 95.45% and a BA of 96.85%. Moreover, compared with other algorithms, the proposed method was robust against overfitting caused by class imbalance and exhibited minimal performance disparity between the training and testing datasets.
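BDA treats the minority (phishing) class as the class of interest: it seeks projections that keep positive samples tightly clustered around their own mean while pushing the other samples away from that mean, by solving the generalized eigenproblem S_neg w = λ S_pos w, where both scatter matrices are taken around the positive-class mean. The Python sketch below follows that textbook formulation with a small ridge term on S_pos. The regularization value, the distance-based score, the toy data, and the assumption that BA denotes balanced accuracy are illustrative choices, not details from the paper.

```python
import numpy as np


def bda_fit(pos, neg, n_components=1, reg=1e-3):
    """Biased discriminant analysis: maximize |W^T S_neg W| / |W^T S_pos W|,
    with both scatter matrices measured around the positive-class mean."""
    m = pos.mean(axis=0)
    dp, dn = pos - m, neg - m
    s_pos = dp.T @ dp + reg * np.eye(pos.shape[1])  # ridge keeps S_pos invertible
    s_neg = dn.T @ dn
    # Whiten with the Cholesky factor of S_pos to obtain a symmetric eigenproblem.
    l_inv = np.linalg.inv(np.linalg.cholesky(s_pos))
    vals, vecs = np.linalg.eigh(l_inv @ s_neg @ l_inv.T)
    order = np.argsort(vals)[::-1][:n_components]
    return l_inv.T @ vecs[:, order], m, vals[order]


def bda_score(x, w, m):
    """Distance to the positive mean in BDA space (small = looks like phishing)."""
    return np.linalg.norm((x - m) @ w, axis=1)


def balanced_accuracy(y_true, y_pred):
    """Mean of the true positive rate and the true negative rate."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    tpr = np.mean(y_pred[y_true == 1] == 1)
    tnr = np.mean(y_pred[y_true == 0] == 0)
    return (tpr + tnr) / 2


# Hypothetical 2-D toy data: few tightly grouped positives, more spread-out negatives
pos = np.array([[0.0, 0.0], [0.1, 0.0], [-0.1, 0.0], [0.0, 0.1], [0.0, -0.1]])
neg = np.array([[3.0, 0.0], [-3.0, 0.0], [3.0, 1.0], [-3.0, -1.0], [0.0, 3.0], [0.0, -3.0]])
w, m, ratios = bda_fit(pos, neg)
print(bda_score(pos, w, m), bda_score(neg, w, m))
```

Because only the positive class needs to be compact, BDA does not require the majority class to form a single cluster, which suits heterogeneous "general" messages; a threshold on `bda_score` then yields the classifier, and balanced accuracy weights both classes equally regardless of their sizes.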

(This article belongs to the Special Issue Machine Learning Approaches for Imbalanced Domains: Emerging Trends and Applications)

Open Access Article

Addressing Data Scarcity in the Medical Domain: A GPT-Based Approach for Synthetic Data Generation and Feature Extraction

by

Fahim Sufi

Information 2024, 15(5), 264; https://doi.org/10.3390/info15050264 - 6 May 2024

Abstract

This research confronts the persistent challenge of data scarcity in medical machine learning by introducing a methodology that harnesses the capabilities of Generative Pre-trained Transformers (GPT). To address the limitations posed by a dearth of labeled medical data, our approach synthetically generates comprehensive patient discharge messages, with GPT autonomously generating 20 fields. Through a meticulous review of the existing literature, we systematically explore GPT's aptitude for synthetic data generation and feature extraction, providing a robust foundation for subsequent phases of the research. The empirical demonstration presents over 70 patient discharge messages with synthetically generated fields, including severity and chances of hospital readmission with justification. Moreover, the data were deployed in a mobile solution in which regression algorithms autonomously identified the factors correlated with the severity of patients' conditions. This study not only establishes a novel and comprehensive methodology but also contributes to medical machine learning, presenting the most extensive patient discharge summaries reported in the literature. The results underscore the efficacy of GPT in overcoming data scarcity challenges and pave the way for future research to refine and expand the application of GPT in diverse medical contexts.

(This article belongs to the Special Issue Information Systems in Healthcare)

Open Access Review

Cybercrime Intention Recognition: A Systematic Literature Review

by

Yidnekachew Worku Kassa, Joshua Isaac James and Elefelious Getachew Belay

Information 2024, 15(5), 263; https://doi.org/10.3390/info15050263 - 5 May 2024

Abstract

In this systematic literature review, we examine intention recognition in the context of digital forensics and cybercrime. The rise of cybercrime has become a major concern for individuals, organizations, and governments worldwide. Digital forensics is the field concerned with identifying, preserving, and analyzing digital evidence that can be used in a court of law. Intention recognition is a subfield of artificial intelligence concerned with identifying agents' intentions from their actions and changes of state. In the context of cybercrime, intention recognition can be used to identify the intentions of cybercriminals and even to predict their future actions. Following the PRISMA systematic review approach, we curated research articles from reputable journals and categorized them into three modeling approaches: logic-based, classical machine learning-based, and deep learning-based. Notably, intention recognition is no longer confined to network security, as it historically was; it now addresses critical challenges across various subdomains, including social engineering attacks, artificial intelligence black box vulnerabilities, and physical security. Although deep learning has emerged as the dominant paradigm, its inherent lack of transparency poses a challenge in the digital forensics landscape, where it is imperative that models possess intrinsic explainability and logical coherence in order to foster judicial confidence, mitigate biases, and uphold accountability for their determinations. To this end, we advocate for hybrid solutions that blend explainability, reasonableness, efficiency, and accuracy. Furthermore, we propose the creation of a taxonomy to precisely define intention recognition, paving the way for future advancements in this pivotal field.

(This article belongs to the Special Issue Digital Forensic Investigation and Incident Response)

Open Access Article

Novel Ransomware Detection Exploiting Uncertainty and Calibration Quality Measures Using Deep Learning

by

Mazen Gazzan and Frederick T. Sheldon

Information 2024, 15(5), 262; https://doi.org/10.3390/info15050262 - 5 May 2024

Abstract

Ransomware poses a significant threat by encrypting files or systems and demanding payment of a ransom. Early detection is essential to mitigate its impact. This paper presents an Uncertainty-Aware Dynamic Early Stopping (UA-DES) technique for optimizing Deep Belief Networks (DBNs) in ransomware detection. UA-DES leverages Bayesian methods, dropout techniques, and an active learning framework to dynamically adjust the number of epochs during training of the detection model, preventing overfitting while enhancing model accuracy and reliability. Our solution, which we call “UA-DES-DBN”, takes as input a set of Application Programming Interfaces (APIs) representing ransomware behavior. The method incorporates uncertainty and calibration quality measures, optimizing the training process for more accurate ransomware detection. Experiments demonstrate the effectiveness of UA-DES-DBN compared with more conventional models. The proposed model improved accuracy from 94% to 98% across various input sizes, surpassing other models. UA-DES-DBN also decreased the false positive rate from 0.18 to 0.10, making it more useful in real-world cybersecurity applications.
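The abstract does not spell out the UA-DES rules, so the Python sketch below only illustrates the generic ingredients it names: predictive entropy over Monte Carlo dropout passes as an uncertainty measure, expected calibration error (ECE) as a calibration quality measure, and a patience-based stopping rule on a validation score. The function names, equal-width binning, and thresholds are assumptions; how UA-DES combines such signals is defined in the paper, not here.

```python
import math


def predictive_entropy(mc_probs):
    """Entropy of the mean class distribution over T Monte Carlo dropout passes.
    mc_probs: list of T lists, each holding one pass's class probabilities."""
    k = len(mc_probs[0])
    mean = [sum(p[c] for p in mc_probs) / len(mc_probs) for c in range(k)]
    return -sum(p * math.log(p) for p in mean if p > 0)


def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted gap between accuracy and mean confidence over equal-width bins."""
    n = len(confidences)
    ece = 0.0
    for b in range(n_bins):
        lo, hi = b / n_bins, (b + 1) / n_bins
        idx = [i for i, c in enumerate(confidences)
               if lo < c <= hi or (b == 0 and c == 0.0)]
        if not idx:
            continue
        acc = sum(correct[i] for i in idx) / len(idx)
        conf = sum(confidences[i] for i in idx) / len(idx)
        ece += len(idx) / n * abs(acc - conf)
    return ece


def should_stop(history, patience=3, min_delta=1e-3):
    """Stop when the monitored score (lower is better, e.g. validation ECE or
    mean entropy) has not improved by min_delta during the last `patience` epochs."""
    if len(history) <= patience:
        return False
    best_before = min(history[:-patience])
    return min(history[-patience:]) > best_before - min_delta


print(predictive_entropy([[0.9, 0.1], [0.1, 0.9]]))  # passes disagree -> high entropy
```

Monitoring uncertainty or calibration instead of raw training loss lets training stop once additional epochs no longer make the detector's confidence more trustworthy, which is the general motivation the abstract gives for dynamic early stopping.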

(This article belongs to the Special Issue Novel Approaches for Information Security in Complex Cyber-Physical Systems)

Open Access Article

The Impact of Immersive Virtual Reality on Knowledge Acquisition and Adolescent Perceptions in Cultural Education

by

Athanasios Christopoulos, Maria Styliou, Nikolaos Ntalas and Chrysostomos Stylios

Information 2024, 15(5), 261; https://doi.org/10.3390/info15050261 - 3 May 2024

Abstract

Understanding local history is fundamental to fostering a comprehensive global viewpoint. As technological advances shape our pedagogical tools, Virtual Reality (VR) stands out for its potential educational impact. Although its promise in educational settings is widely acknowledged, especially in science, technology, engineering, and mathematics (STEM) fields, noticeably less research has explored VR's efficacy in the arts. The present study examines the effects of VR-mediated interventions on cultural education. Specifically, secondary school adolescents (N = 52) explored local history through an immersive 360° VR experience. We conducted pre- and post-intervention assessments to gauge participants' grasp of the content and distributed psychometric instruments to evaluate their reception of VR as an instructional approach. The analysis indicates that VR's immersive elements enhance knowledge acquisition, but the impact is modulated by the complexity of the subject matter. Additionally, the study reveals that a tailored, context-sensitive instructional design is paramount for optimising learning outcomes and mitigating educational inequities. This work challenges the “one-size-fits-all” approach to educational VR, advocating for a more targeted instructional approach. Consequently, it emphasises the need for educators and VR developers to collaborate in tailoring interventions that are both culturally and contextually relevant.


(This article belongs to the Special Issue Innovation in Education, Training and Game Design with Immersive Technologies and Spatial Computing)


Open Access Article

Medical Support Vehicle Location and Deployment at Mass Casualty Incidents

by

Miguel Medina-Perez, Giovanni Guzmán, Magdalena Saldana-Perez and Valeria Karina Legaria-Santiago

Information 2024, 15(5), 260; https://doi.org/10.3390/info15050260 - 3 May 2024

Abstract

Anticipating and planning for the urgent response to large-scale disasters is critical to increase the probability of survival at these events. These incidents present various challenges that complicate the response, such as unfavorable weather conditions, difficulties in accessing affected areas, and the geographical

[...] Read more.

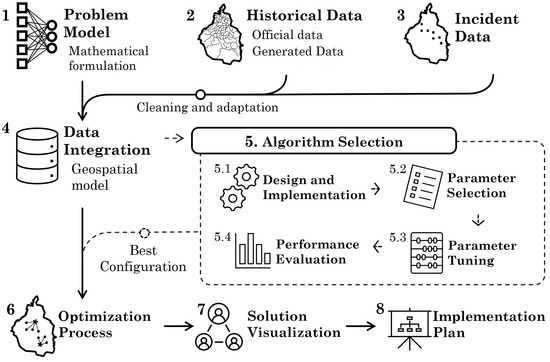

Anticipating and planning for the urgent response to large-scale disasters is critical to increasing victims’ probability of survival in these events. These incidents present various challenges that complicate the response, such as unfavorable weather conditions, difficulties in accessing affected areas, and the geographical spread of the victims. Furthermore, local socioeconomic factors, such as inadequate prevention education, limited disaster resources, and insufficient coordination between public and private emergency services, can complicate these situations. In large-scale emergencies, multiple demand points (DPs) are generally observed, which requires coordinating the strategic allocation of human and material resources across different geographical areas. Therefore, precise management of these resources based on the specific needs of each area becomes fundamental. To address these complexities, this paper proposes a methodology that models these scenarios as a multi-objective optimization problem, focusing on the location-allocation problem of resources in Mass Casualty Incidents (MCIs). The case study is a post-earthquake scenario in Mexico City, using volunteered geographic information, open government data, and historical data from the 19 September 2017 earthquake. The resources that require optimal location and allocation are assumed to be ambulances, which address the medical issues that affect victims’ survival. The designed solution uses a metaheuristic optimization technique, together with a parameter-tuning technique, to find configurations that perform well across different instances of the problem, i.e., different hypothetical scenarios that can serve as a reference for possible future situations. Finally, the different solutions are presented graphically, accompanied by relevant information to facilitate decision-making by the authorities responsible for implementing them in practice.
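Multi-objective location-allocation methods generally report only non-dominated (Pareto-optimal) configurations. As a minimal sketch, and not the authors’ metaheuristic, the filter below assumes each candidate ambulance layout has already been scored on two hypothetical objectives to be minimized (mean response time and number of uncovered demand points); the layout labels and values are illustrative only.

```python
from typing import List, Tuple

# A candidate configuration: (label, objective vector). Every objective is minimized.
Candidate = Tuple[str, Tuple[float, ...]]


def dominates(a: Tuple[float, ...], b: Tuple[float, ...]) -> bool:
    """True if `a` is no worse than `b` on every objective and strictly better on one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(candidates: List[Candidate]) -> List[Candidate]:
    """Return the candidates that no other candidate dominates, in input order."""
    return [
        cand
        for cand in candidates
        if not any(dominates(other[1], cand[1]) for other in candidates if other is not cand)
    ]


if __name__ == "__main__":
    # Hypothetical ambulance layouts scored as
    # (mean response time in minutes, number of uncovered demand points).
    layouts = [
        ("A", (12.0, 3.0)),
        ("B", (9.0, 5.0)),
        ("C", (14.0, 4.0)),  # dominated by A
        ("D", (9.5, 5.0)),   # dominated by B
    ]
    for label, objectives in pareto_front(layouts):
        print(label, objectives)  # prints A and B only
```

In a full pipeline, the metaheuristic would generate the candidates, and the surviving non-dominated set is what would be handed to decision-makers for graphical comparison.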


(This article belongs to the Special Issue Telematics, GIS and Artificial Intelligence)


Open Access Article

Enhanced Fault Detection in Bearings Using Machine Learning and Raw Accelerometer Data: A Case Study Using the Case Western Reserve University Dataset

by

Krish Kumar Raj, Shahil Kumar, Rahul Ranjeev Kumar and Mauro Andriollo

Information 2024, 15(5), 259; https://doi.org/10.3390/info15050259 - 2 May 2024

Abstract

This study introduces a novel approach for fault classification in bearing components utilizing raw accelerometer data. By employing various neural network models, including deep learning architectures, we bypass the traditional preprocessing and feature-extraction stages, streamlining the classification process. Utilizing the Case Western Reserve

[...] Read more.

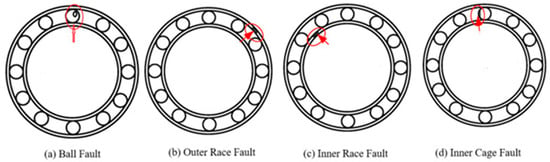

This study introduces a novel approach for fault classification in bearing components utilizing raw accelerometer data. By employing various neural network models, including deep learning architectures, we bypass the traditional preprocessing and feature-extraction stages, streamlining the classification process. Using the Case Western Reserve University (CWRU) bearing dataset, our methodology demonstrates remarkable accuracy, particularly with deep learning networks such as the three convolutional neural network (CNN) variants, which achieve above 98% accuracy across various loading levels and establish a new benchmark in fault-detection efficiency. Notably, data exploration through principal component analysis (PCA) and t-distributed stochastic neighbor embedding (t-SNE) provided valuable insights into feature relationships and patterns, aiding in effective fault detection. This research not only demonstrates the efficacy of neural network classifiers in handling raw data but also opens avenues for simpler yet effective diagnostic methods in machinery health monitoring. These findings suggest significant potential for real-world applications, offering a faster yet still reliable alternative to conventional fault-classification techniques.
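Even a raw-data pipeline that skips feature extraction still has to cut each recording into fixed-size inputs for a CNN. The sketch below shows that segmentation step only; the window length and stride are hypothetical, since the abstract does not state the segmentation the authors used, and no network is included.

```python
from typing import List, Sequence, Tuple

# One training example: (window of raw samples, fault-class label).
Segment = Tuple[List[float], int]


def segment_signal(signal: Sequence[float], label: int, window: int, stride: int) -> List[Segment]:
    """Split one raw accelerometer recording into labelled, fixed-length windows.

    Samples that cannot complete a full window at the end are dropped, so every
    segment has exactly `window` samples, as a fixed-size 1D CNN input requires.
    """
    if window <= 0 or stride <= 0:
        raise ValueError("window and stride must be positive")
    return [
        (list(signal[start:start + window]), label)
        for start in range(0, len(signal) - window + 1, stride)
    ]


if __name__ == "__main__":
    raw = [0.01 * i for i in range(10)]  # stand-in for one recording
    windows = segment_signal(raw, label=1, window=4, stride=2)
    print(len(windows))  # 4 windows starting at samples 0, 2, 4, 6
```

Overlapping windows (stride smaller than the window) are a common way to enlarge the training set from a limited number of recordings; whether that applies here is an assumption.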


(This article belongs to the Section Information Applications)


Open Access Article

A Hybrid MCDM Approach Using the BWM and the TOPSIS for a Financial Performance-Based Evaluation of Saudi Stocks

by

Abdulrahman T. Alsanousi, Ammar Y. Alqahtani, Anas A. Makki and Majed A. Baghdadi

Information 2024, 15(5), 258; https://doi.org/10.3390/info15050258 - 2 May 2024

Abstract


This study presents a hybrid multicriteria decision-making approach for evaluating stocks in the Saudi Stock Market. The objective is to provide investors and stakeholders with a robust evaluation methodology to inform their investment decisions. With a market value of USD 2.89 trillion in September 2022, the Saudi Stock Market is of significant importance for the country’s economy. However, the complexities of stock market performance pose investment challenges. To address these challenges, this study employs the best–worst method (BWM) and the technique for order of preference by similarity to ideal solution (TOPSIS). Using data from the Saudi Stock Market (Tadawul), this study evaluates stock performance based on financial criteria, including return on equity, return on assets, net profit margin, and asset turnover. The findings reveal valuable insights, particularly in the banking sector, which exhibited the highest net profit margin ratios among sectors. The hybrid multicriteria decision-making approach enhances investment decisions. This research provides a foundation for future investigations, facilitating a deeper exploration and analysis of additional aspects of the Saudi Stock Market’s performance. The developed methodology and findings have implications for investors and stakeholders, aiding their investment decisions and helping to maximize returns.
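Once criterion weights are available (in this study they come from the BWM), TOPSIS ranks alternatives by their relative closeness to an ideal solution. The sketch below is a textbook TOPSIS with vector normalization, not the authors’ exact implementation; it assumes all four financial ratios are benefit criteria (higher is better), and the stock labels, ratios, and weights in the usage block are made up rather than Tadawul data.

```python
import math
from typing import List, Sequence


def topsis(matrix: Sequence[Sequence[float]], weights: Sequence[float],
           benefit: Sequence[bool]) -> List[float]:
    """Return the TOPSIS closeness coefficient (0..1, higher is better) of each row.

    matrix[i][j]: value of alternative i on criterion j.
    weights[j]: criterion weight (e.g. from BWM), assumed to sum to 1.
    benefit[j]: True if larger values of criterion j are preferable.
    """
    n_crit = len(weights)
    # 1. Vector-normalize each criterion column, then apply its weight.
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) for j in range(n_crit)]
    if any(n == 0 for n in norms):
        raise ValueError("a criterion column is all zeros")
    weighted = [[weights[j] * row[j] / norms[j] for j in range(n_crit)] for row in matrix]
    # 2. Positive-ideal and negative-ideal values per criterion.
    columns = list(zip(*weighted))
    ideal = [max(c) if benefit[j] else min(c) for j, c in enumerate(columns)]
    anti = [min(c) if benefit[j] else max(c) for j, c in enumerate(columns)]
    # 3. Euclidean distance to each reference point, then relative closeness.
    scores = []
    for row in weighted:
        d_plus = math.dist(row, ideal)
        d_minus = math.dist(row, anti)
        total = d_plus + d_minus
        # total is 0 only when every alternative is identical; treat that as a neutral tie.
        scores.append(d_minus / total if total > 0 else 0.5)
    return scores


if __name__ == "__main__":
    stocks = ["S1", "S2", "S3"]  # hypothetical stocks
    # Columns: return on equity, return on assets, net profit margin, asset turnover.
    ratios = [[0.15, 0.02, 0.40, 0.05],
              [0.10, 0.05, 0.10, 0.50],
              [0.20, 0.03, 0.25, 0.10]]
    weights = [0.4, 0.2, 0.3, 0.1]  # hypothetical BWM output
    scores = topsis(ratios, weights, benefit=[True] * 4)
    for name, score in sorted(zip(stocks, scores), key=lambda p: p[1], reverse=True):
        print(f"{name}: {score:.3f}")
```

`math.dist` requires Python 3.8 or later. A cost criterion (where lower is better) is handled by setting its `benefit` flag to `False`.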


(This article belongs to the Special Issue New Applications in Multiple Criteria Decision Analysis II)


Open Access Article

FIWARE-Compatible Smart Data Models for Satellite Imagery and Flood Risk Assessment to Enhance Data Management

by

Ioannis-Omiros Kouloglou, Gerasimos Antzoulatos, Georgios Vosinakis, Francesca Lombardo, Alberto Abella, Marios Bakratsas, Anastasia Moumtzidou, Evangelos Maltezos, Ilias Gialampoukidis, Eleftherios Ouzounoglou, Stefanos Vrochidis, Angelos Amditis, Ioannis Kompatsiaris and Michele Ferri

Information 2024, 15(5), 257; https://doi.org/10.3390/info15050257 - 2 May 2024

Abstract


The increasing rate of adoption of innovative technological achievements, along with the penetration of Next Generation Internet (NGI) technologies and Artificial Intelligence (AI) in the water sector, is leading to a shift towards a Water-Smart Society. New challenges have emerged in terms of data interoperability, sharing, and trustworthiness due to the rapidly increasing volume of heterogeneous data generated by multiple technologies. Hence, there is a need for efficient harmonization and smart modeling of the data to foster advanced AI analytical processes, which will lead to efficient water data management. The main objective of this work is to propose two Smart Data Models focusing on the modeling of satellite imagery data and of flood risk assessment processes. Using these models reinforces the fusion and homogenization of diverse information and data, facilitating the adoption of AI technologies for flood mapping and monitoring. Furthermore, a holistic framework is developed and evaluated via qualitative and quantitative performance indicators, revealing the efficacy of the proposed models in real use cases. The framework is built on well-known technologies compatible with the NGSI-LD standard, which can be easily customized and applied to support water data management processes effectively.
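FIWARE Smart Data Models are exchanged as NGSI-LD entities: JSON-LD objects with a URN `id`, a `type`, and attributes typed as `Property`, `GeoProperty`, or `Relationship`. The sketch below builds such an entity for one satellite image. The entity type `SatelliteImage`, the attribute names, and the sample values are hypothetical placeholders, not the schemas proposed in the paper.

```python
import json
from typing import Any, Dict, List

# Standard NGSI-LD core @context published by ETSI.
NGSI_LD_CORE_CONTEXT = "https://uri.etsi.org/ngsi-ld/v1/ngsi-ld-core-context.jsonld"


def make_satellite_image_entity(image_id: str, sensor: str, acquired_at: str,
                                footprint: List[List[float]],
                                cloud_cover_pct: float) -> Dict[str, Any]:
    """Build a minimal NGSI-LD entity for one satellite image (hypothetical schema).

    `footprint` is a ring of [longitude, latitude] pairs, encoded as a GeoJSON
    Polygon; GeoJSON requires the ring to be closed (first point == last point).
    """
    if footprint[0] != footprint[-1]:
        raise ValueError("footprint ring must be closed")
    return {
        "id": f"urn:ngsi-ld:SatelliteImage:{image_id}",
        "type": "SatelliteImage",  # hypothetical entity type name
        "sensor": {"type": "Property", "value": sensor},
        "acquisitionDate": {  # hypothetical attribute name
            "type": "Property",
            "value": {"@type": "DateTime", "@value": acquired_at},
        },
        "cloudCover": {"type": "Property", "value": cloud_cover_pct},  # percent
        "location": {
            "type": "GeoProperty",
            "value": {"type": "Polygon", "coordinates": [footprint]},
        },
        "@context": [NGSI_LD_CORE_CONTEXT],
    }


if __name__ == "__main__":
    ring = [[22.90, 40.60], [23.00, 40.60], [23.00, 40.70], [22.90, 40.60]]
    entity = make_satellite_image_entity("img-001", "Sentinel-2 MSI",
                                         "2023-10-01T10:00:00Z", ring, 12.5)
    print(json.dumps(entity, indent=2))
```

An entity of this shape would typically be created by POSTing it to an NGSI-LD context broker’s `/ngsi-ld/v1/entities` endpoint with `Content-Type: application/ld+json`, which is required when the `@context` travels in the body.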


(This article belongs to the Topic Recent Advances and Technologies in Emergency Response, Security and Disaster Management Applications)


Open Access Article

Epileptic Seizure Detection from Decomposed EEG Signal through 1D and 2D Feature Representation and Convolutional Neural Network

by

Shupta Das, Suraiya Akter Mumu, M. A. H. Akhand, Abdus Salam and Md Abdus Samad Kamal

Information 2024, 15(5), 256; https://doi.org/10.3390/info15050256 - 2 May 2024

Abstract


Electroencephalogram (EEG) has emerged as the most favorable source for recognizing brain disorders like epileptic seizure (ES) using deep learning (DL) methods. This study investigated a well-performing EEG-based ES detection method based on decomposing EEG signals. Specifically, empirical mode decomposition (EMD) decomposes EEG signals into six intrinsic mode functions (IMFs). Three distinct features, namely, the fluctuation index, the variance, and the ellipse area of the second-order difference plot (SODP), were extracted from each of the IMFs. The feature values from all EEG channels were arranged in two composite feature forms: a 1D (i.e., unidimensional) form and a 2D image-like form. For ES recognition, the convolutional neural network (CNN), the most prominent DL model for 2D input, was used for the 2D feature form, and a 1D version of the CNN was employed for the 1D feature form. The experiment was conducted on the benchmark CHB-MIT dataset as well as a dataset prepared from the EEG signals of ES patients from Prince Hospital Khulna (PHK), Bangladesh. The 2D feature-based CNN model outperformed the 1D feature-based models, showing an accuracy of 99.78% for CHB-MIT and 95.26% for PHK. Furthermore, the cross-dataset evaluations also showed favorable outcomes. Therefore, the proposed method with the 2D composite feature form appears to be a promising ES detection method.
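The three per-IMF features can be computed directly from a sampled signal. The abstract does not give their formulas, so the definitions below follow a formulation common in the SODP literature and should be treated as assumptions: the fluctuation index as the mean absolute first difference, the population variance, and the area of the 95% confidence ellipse of the SODP, whose axes are X(n) = x(n+1) − x(n) and Y(n) = x(n+2) − x(n+1). EMD itself is not shown; the input is assumed to be one already-extracted IMF.

```python
import math
import statistics
from typing import Dict, Sequence


def fluctuation_index(x: Sequence[float]) -> float:
    """Mean absolute first difference |x(n+1) - x(n)| over the signal (assumed definition)."""
    if len(x) < 2:
        raise ValueError("need at least two samples")
    return sum(abs(x[n + 1] - x[n]) for n in range(len(x) - 1)) / (len(x) - 1)


def sodp_ellipse_area(x: Sequence[float]) -> float:
    """Area of the 95% confidence ellipse fitted to the second-order difference plot."""
    if len(x) < 3:
        raise ValueError("need at least three samples")
    # SODP points: X(n) = x(n+1) - x(n) plotted against Y(n) = x(n+2) - x(n+1).
    xs = [x[n + 1] - x[n] for n in range(len(x) - 2)]
    ys = [x[n + 2] - x[n + 1] for n in range(len(x) - 2)]
    m = len(xs)
    # Second moments about the origin, as in the usual SODP formulation (no mean removal).
    sx2 = sum(v * v for v in xs) / m
    sy2 = sum(v * v for v in ys) / m
    sxy = sum(a * b for a, b in zip(xs, ys)) / m
    total = sx2 + sy2
    d = math.sqrt(max(total ** 2 - 4 * (sx2 * sy2 - sxy ** 2), 0.0))
    # Semi-axes sqrt(3 * (total +/- d)) = sqrt(6 * eigenvalue), close to the 95% chi-square bound.
    major = math.sqrt(3 * (total + d))
    minor = math.sqrt(3 * max(total - d, 0.0))
    return math.pi * major * minor


def imf_features(imf: Sequence[float]) -> Dict[str, float]:
    """The three per-IMF features named in the abstract; EMD is assumed to have run already."""
    return {
        "fluctuation_index": fluctuation_index(imf),
        "variance": statistics.pvariance(imf),
        "sodp_ellipse_area": sodp_ellipse_area(imf),
    }
```

With these definitions the ellipse area simplifies to 6π·sqrt(S_X²·S_Y² − S_XY²), so it collapses to zero whenever the SODP points lie on a line, as they do for a linear ramp. Stacking the resulting values for six IMFs across all channels gives the kind of feature grid that the 1D and 2D forms rearrange.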


(This article belongs to the Special Issue Deep Learning for Image, Video and Signal Processing)


Open Access Article

Proactive Agent Behaviour in Dynamic Distributed Constraint Optimisation Problems

by

Brighter Agyemang, Fenghui Ren and Jun Yan

Information 2024, 15(5), 255; https://doi.org/10.3390/info15050255 - 2 May 2024

Abstract


In multi-agent systems, the Dynamic Distributed Constraint Optimisation Problem (D-DCOP) framework is pivotal, allowing for the decomposition of global objectives into agent constraints. Proactive agent behaviour is crucial in such systems, enabling agents to anticipate future changes and adapt accordingly. Existing approaches, like Proactive Dynamic DCOP (PD-DCOP) algorithms, often require a predefined environment model. We address the problem of enabling proactive agent behaviour in D-DCOPs where the dynamics model of the environment is unknown. Specifically, we propose an approach where agents learn local autoregressive models from observations, predicting future states to inform decision-making. To achieve this, we present a temporal experience-sharing message-passing algorithm that leverages dynamic agent connections and a distance metric to collate training data. Our approach outperformed baseline methods in a search-and-extinguish task using the RoboCup Rescue Simulator, achieving lower total building damage. The experimental results align with prior work on the significance of decision-switching costs and demonstrate improved performance when the switching cost is combined with a learned model.
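The learning step described above, in which each agent fits a local autoregressive model to observed states and predicts ahead, can be sketched with the simplest case: a first-order AR model with an intercept, fitted by ordinary least squares. The model order, the scalar state (for instance, a hypothetical per-building fire-intensity reading), and the helper names are assumptions for illustration; the experience-sharing message passing and the decision-switching cost are not shown.

```python
from typing import List, Sequence, Tuple


def fit_ar1(series: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of x[t] = c + phi * x[t-1]; returns (c, phi)."""
    if len(series) < 3:
        raise ValueError("need at least three observations")
    prev, curr = list(series[:-1]), list(series[1:])
    n = len(prev)
    mean_p, mean_c = sum(prev) / n, sum(curr) / n
    var_p = sum((p - mean_p) ** 2 for p in prev)
    if var_p == 0:
        # Constant history: predict the mean with no dependence on the previous value.
        return mean_c, 0.0
    cov = sum((p - mean_p) * (c - mean_c) for p, c in zip(prev, curr))
    phi = cov / var_p
    return mean_c - phi * mean_p, phi


def forecast(series: Sequence[float], steps: int) -> List[float]:
    """Roll the fitted AR(1) model forward `steps` time steps from the last observation."""
    c, phi = fit_ar1(series)
    preds, last = [], series[-1]
    for _ in range(steps):
        last = c + phi * last
        preds.append(last)
    return preds


if __name__ == "__main__":
    # Hypothetical observations generated by x[t] = 1 + 0.5 * x[t-1].
    history = [0.0, 1.0, 1.5, 1.75, 1.875]
    print(fit_ar1(history))      # approximately (1.0, 0.5)
    print(forecast(history, 2))  # approximately [1.9375, 1.96875]
```

An agent would feed such predicted future states into its local constraint evaluation when choosing a value, rather than reacting only to the current state.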


(This article belongs to the Special Issue Intelligent Agent and Multi-Agent System)



Topics

Topic in

Applied Sciences, Drones, Information, Sensors

Recent Advances and Technologies in Emergency Response, Security and Disaster Management Applications

Topic Editors: Evangelos Maltezos, Eleftherios Ouzounoglou, Panagiotis Michalis, Angelos Amditis, Stefanos Vrochidis, Norman Kerle, Christos Ntanos

Deadline: 20 May 2024

Topic in

Algorithms, Diagnostics, Entropy, Information, J. Imaging

Application of Machine Learning in Molecular Imaging

Topic Editors: Allegra Conti, Nicola Toschi, Marianna Inglese, Andrea Duggento, Matthew Grech-Sollars, Serena Monti, Giancarlo Sportelli, Pietro Carra

Deadline: 31 May 2024

Topic in

Drones, Electronics, Future Internet, Information, Mathematics

Future Internet Architecture: Difficulties and Opportunities

Topic Editors: Peiying Zhang, Haotong Cao, Keping Yu

Deadline: 30 June 2024

Topic in

Algorithms, Computation, Information, Mathematics

Complex Networks and Social Networks

Topic Editors: Jie Meng, Xiaowei Huang, Minghui Qian, Zhixuan Xu

Deadline: 31 July 2024


Special Issues

Special Issue in

Information

AI Applications in Construction and Infrastructure

Guest Editor: Sudipta Chowdhury

Deadline: 15 May 2024

Special Issue in

Information

Health Data Information Retrieval

Guest Editors: Mario Ciampi, Mario Sicuranza

Deadline: 31 May 2024

Special Issue in

Information

Text Mining: Challenges, Algorithms, Tools and Applications

Guest Editor: Fei Liu

Deadline: 15 June 2024

Special Issue in

Information

Systems Engineering and Knowledge Management

Guest Editor: Vladimír Bureš

Deadline: 30 June 2024

Topical Collections

Topical Collection in

Information

Natural Language Processing and Applications: Challenges and Perspectives

Collection Editor: Diego Reforgiato Recupero

Topical Collection in

Information

Knowledge Graphs for Search and Recommendation

Collection Editors: Pierpaolo Basile, Annalina Caputo

Topical Collection in

Information

Augmented Reality Technologies, Systems and Applications

Collection Editors: Ramon Fabregat, Jorge Bacca-Acosta, N.D. Duque-Mendez